Sparse Multivariate Analysis via L1 Regularization

HIROSE, Kei

Degree: PhD (Functional Mathematics) (Kyushu University)

Research interests: Sparse estimation, L1 regularization, Multivariate Analysis

Recently, the analysis of big data has becoming more and more important. Although the data volumes are increasing, most of the data values can be meaningless. Therefore, it is important to extract meaningful information from the big data. The sparse estimation, such as L1 regularization, is one of the most efficient methods to achieve this. The sparse estimation makes most of the parameters exactly zeroes. The meaningful variables correspond to the nonzero parameters. A remarkable feature of the L1 regularization is that even if the number of parameters is several millions, it takes only several minutes to compute the solution. In addition, the L1 regularization has many good statistical properties. For these reasons, many statisticians are interested in the L1 regularization.

I am interested in multivariate analysis via L1 regularization. Multivariate analysis investigates a relationship among variables by some procedure such as aggregating several variables. The multivariate analysis has been widely used for several tens of years. I am interested in factor analysis, which is one of the most popular multivariate analyses. Conventionally, the factor analysis has been used in psychology and social sciences, but recently it has been used in life science and machine learning. The factor analysis has been becoming more and more important in many research areas. In the following, I introduce two recent results related to factor analysis.

(1) Sparse estimation in factor analysis

An interesting fact of the factor analysis is that the factor loadings have a rotational indeterminacy, that is, the loading matrix is not unique. In factor analysis, we estimate parameters by using rotation technique. This kind of technique may not be used in any other statistical models. The rotation technique has been widely used in factor analysis for more than 50 years.

I applied the L1 regularization to factor analysis model and compared the L1 regularization with the rotation techniques.

Then, I found a very interesting fact: the regularization is a generalization of the rotation technique, and the regularization can achieve sparser solutions than the rotation technique. Furthermore, I developed an efficient algorithm for computing the entire solutions, and made an R package fanc (https://cran. r-project.org/web/packages/fanc/index.html). There exists papers that use the fanc package.

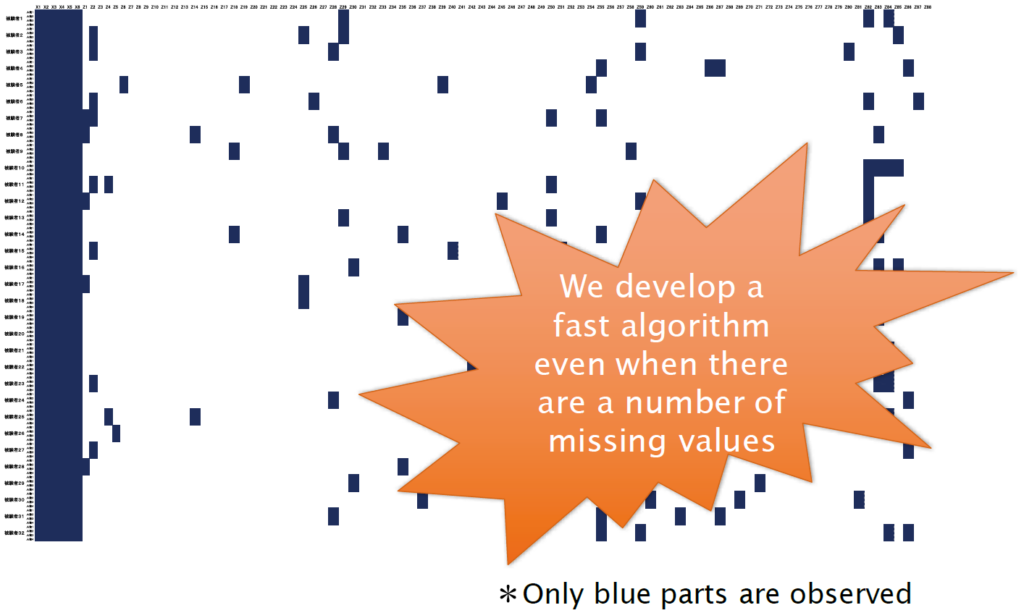

(2) Maximum likelihood estimation in factor analysis for a large number of missing values

In some cases, the data values can be missing. For example, when a questionnaire asks a research participant about a feeling towards another person, many questions are prepared in order to investigate their impressions, using a wide variety of personalassessment measures. However, answering all of the questions may cause participants fatigue and inattention. In order to gather the high-quality data, the participants may be asked to select just a few of questions; this leads to a large number of missing values.

When the data values are missing, we can use a standard EM algorithm to estimate parameters. However, when a majority of data values are missing, the ordinary EM algorithm is extremely slow. In order to handle this problem, I modified the EM algorithm. I found that the modified EM algorithm is several hundreds or thousands times faster than the ordinary EM algorithm.